Waiting.

I’m XY Shim. This is my newsletter of fun personal work and a behind-the-scenes look at how I work.

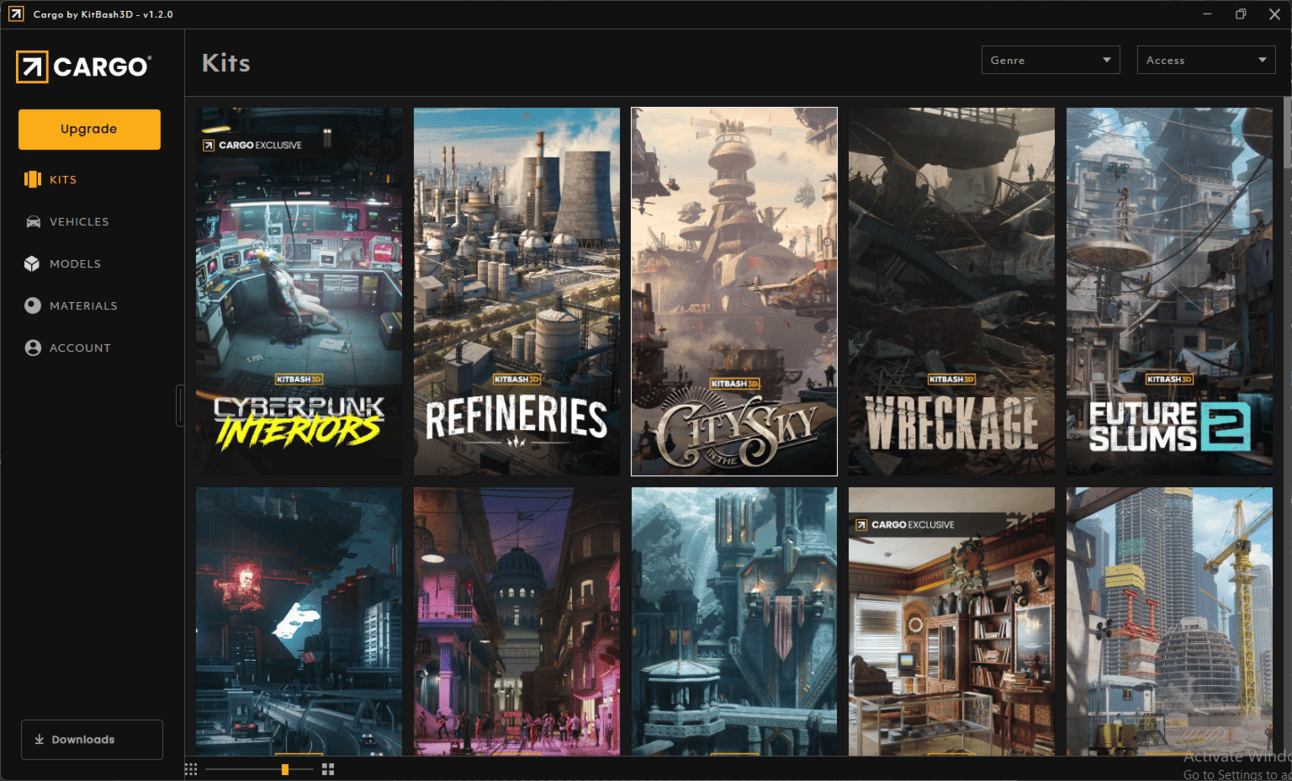

I made this image because I wanted to play around with Kitbash3D’s Cargo.

When I was learning Blender, it was all about learning how to model. I made a bunch of low-poly dinosaurs and plants by moving around verts. As a learning project, I even made a book. Actually, a series of books, Dinosaur Fairytales, under a different pen name.

Now, I do very little modeling. Mostly, it’s cleaning up and adjusting models to fit into a scene. I feel like I’m mostly scene building. Is that a thing?

I use Blenderkit to import a table or books or a lamp that I can turn into a spaceship. It’s not high end, but it gets the job done.

But Kitbash, on the other hand, is high end. Their models look so good.

For this, I was playing around with their free tier models in Cargo.

Research stage

There was no research stage. I just started downloading models in Cargo. The cyberpunk pharmacy looked cool. Maybe this could be like a YA sci-fi cover?

Sketch in Blender

A lot of time is spent in the scene moving the camera and objects. I’m looking for the right composition. I change the lighting (I started with daytime, but then changed it to night). I played around with focal length and finally settled on a fisheye lens.

It’s amazing how much information I can process using 3d. If I was only working in 2d, I would spend a lot of time blocking out and doing a rough painting. We were taught to start with quick rough sketches (low fidelity images) and slowly make decisions as we add details and build up to a high fidelity image. But what if we’re wrong and want to go back? Arrggh.. What if I had to change the lighting? That would suck!

But here, I’m starting with a sketch that is high fidelity. I have tons of information already here about the lighting, the colors, the composition. I can quickly make decisions and see the results at the beginning of the process. I find this very helpful.

The character herself is a placeholder for me. I’m not sure who she is yet… I was thinking a teen waiting... date night? I find there isn’t much personality in 3d characters. I try to flesh out the characters in the rendering stage.

Rendering stage

I input the above image into Fooocus. I use the JuggernautXL checkpoint model. I start with a high denoising strength, around .45 to .5, and let the AI be creative with the image. This way, I’ll know if it’s having problems with the text prompt. As my text prompt gets better, I lower the denoising strength so its outputs are closer to what I inputted.

For example, I wanted a mixed race character. I was a mixed race child growing up in Seoul. I always felt at home and out of place... Maybe like this character here… She helps run her mother’s Chinese medicine shop, but obviously she’s not 100% Chinese. She’s here at home and also out of place.

But the AI had trouble with prompts like “mixed race, half-black,” because it got muddled with “Chinese cyberpunk shop”. So I settled on “darker skin” and, when I got what I wanted, I lowered my denoising strength.

When I’m adjusting the denoising strength, I go down to around .333. It doesn’t change too much from my original image. But I have a bunch of images from .45 to .33. I go through them and select parts that I like and think add to the image, especially in the background.
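If you want to plan that range of values ahead of time, here is a minimal sketch in Python. It only does the arithmetic for stepping from .45 down to .33. The function name `denoise_sweep` and the choice of five steps are my own assumptions for illustration, not anything built into Fooocus, where I set the value by hand in the UI.

```python
def denoise_sweep(start=0.45, stop=0.33, steps=5):
    """Return evenly spaced denoising strengths from start down to stop.

    Hypothetical helper: the defaults mirror the .45 to .33 range
    described above, and the step count is an arbitrary choice.
    """
    if steps < 2:
        raise ValueError("steps must be at least 2")
    step = (stop - start) / (steps - 1)
    # Round to 3 decimals so the values are easy to type into a UI slider.
    return [round(start + i * step, 3) for i in range(steps)]


strengths = denoise_sweep()
for strength in strengths:
    print(f"render a variant at denoising strength {strength}")
```

Higher values at the top of the list give the AI more freedom, and lower values at the bottom stay closer to the Blender render.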

Next I focus on details. I crop out sections and input just that section into Fooocus. The whole image is too complex. But if I crop out sections, it becomes easier to solve using AI.
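I do the cropping by hand, but the idea can be sketched in a few lines of Python. This is only an illustration under my own assumptions: the helper name `padded_crop_box`, the 64-pixel padding, and the example image size are all made up. The box uses the (left, top, right, bottom) order that Pillow's `Image.crop` accepts, in case you want to script it.

```python
def padded_crop_box(box, image_size, padding=64):
    """Grow a (left, top, right, bottom) box by some padding, clamped to the image.

    Hypothetical helper: a little extra context around the detail tends to
    make the section easier to blend back into the full image afterwards.
    """
    left, top, right, bottom = box
    width, height = image_size
    return (
        max(0, left - padding),
        max(0, top - padding),
        min(width, right + padding),
        min(height, bottom + padding),
    )


# Example values only: a detail region inside a 2048 x 3072 render.
detail_box = padded_crop_box((900, 1200, 1300, 1600), (2048, 3072))
print(detail_box)

# A region touching the top-left corner is clamped so it stays inside the image.
corner_box = padded_crop_box((0, 0, 100, 100), (2048, 3072))
print(corner_box)
```

The first two numbers of the returned box are also the position where the reworked section gets pasted back into the full image.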

I could have added a rose in Blender, but I decided to just sketch it:

Let me erase one of those fingers. I have just the tool…

After more tweaking and many more layers:

Hmmm. What’s missing?

Clean up and tweaking

I still felt like there needed to be more to the story… A cat. There needs to be a cat. The cat, I added in Blender along with a tin of milk. It’s nice to be able to go back into Blender, make adjustments and see those changes in the rendered piece.

Save the cat! Now we know she’s the heroine.

For most artists, the last part is the color corrections and tweaking the curves and levels. Make the image pop. But what about changing the lighting in the scene?

You know how I said it would suck to have to change the lighting after so much work has gone into an image? Actually, it doesn’t have to suck anymore. There are AI tools that make relighting an image really easy and much faster than Photoshop layer adjustments.

I know of IC-Light and I tried it with ComfyUI, but I find Clipdrop’s relight tool so much more intuitive and controllable. Maybe not as accurate, but I love how easy it is.

You can see how it works here:

I liked the contrast of the green light in the foreground and the blue in the background. I thought it made the character pop in the middle with the green and blue fluorescent colors surrounding her.

Still waiting…